Trinity-RFT: A General-Purpose and Unified Framework for Reinforcement Fine-Tuning of Large Language Models

💡 What is Trinity-RFT?

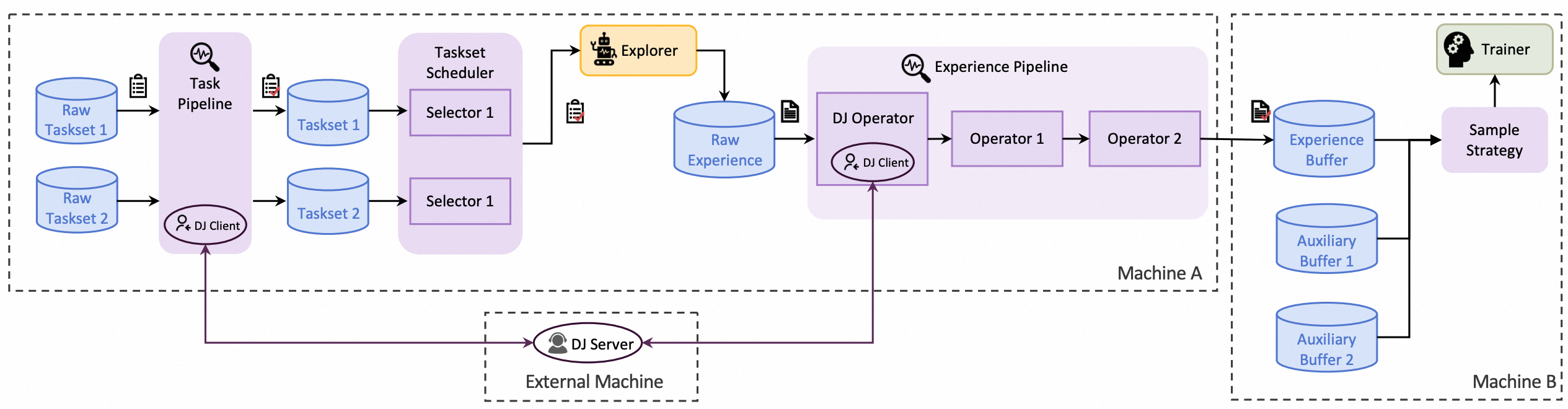

Trinity-RFT is a general-purpose, flexible and user-friendly framework for LLM reinforcement fine-tuning (RFT). It decouples RFT into three components that work in coordination:

- Explorer generates experience data via agent-environment interaction;

- Trainer updates model weights by minimizing losses on the data;

- Buffer pipelines data processing throughout the RFT lifecycle.

Trinity-RFT serves users with different backgrounds and objectives:

- 🤖 Agent application developers: Train LLM-powered agents and improve their capabilities in specific domains [[tutorial]](https://agentscope-ai.github.io/Trinity-RFT/en/main/tutorial/develop_workflow.html)

- 🧠 Reinforcement learning researchers: Design, implement and validate new RL algorithms using compact, plug-and-play modules that allow non-invasive customization [[tutorial]](https://agentscope-ai.github.io/Trinity-RFT/en/main/tutorial/develop_algorithm.html)

- 📊 Data engineers: Create RFT datasets and build data pipelines for cleaning, augmentation, and human-in-the-loop scenarios [[tutorial]](https://agentscope-ai.github.io/Trinity-RFT/en/main/tutorial/develop_operator.html)
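The Explorer, Buffer, and Trainer roles described above can be pictured with a toy loop. This is only a minimal sketch of the decoupling idea: the class names (`ToyExplorer`, `ToyBuffer`, `ToyTrainer`), the `Experience` fields, and the random rewards are all hypothetical and are not Trinity-RFT's actual classes or API.

```python
import random
from collections import deque
from dataclasses import dataclass


@dataclass
class Experience:
    """One rollout sample: a prompt, a response, and a scalar reward."""
    prompt: str
    response: str
    reward: float


class ToyBuffer:
    """FIFO queue standing in for the Buffer (hypothetical, not the real class)."""

    def __init__(self, capacity: int = 1024) -> None:
        self._queue: deque = deque(maxlen=capacity)

    def write(self, experiences: list) -> None:
        self._queue.extend(experiences)

    def read(self, batch_size: int) -> list:
        n = min(batch_size, len(self._queue))
        return [self._queue.popleft() for _ in range(n)]

    def __len__(self) -> int:
        return len(self._queue)


class ToyExplorer:
    """Produces experiences; a real explorer would query a model and an environment."""

    def __init__(self, seed: int = 0) -> None:
        self._rng = random.Random(seed)

    def explore(self, prompts: list) -> list:
        # The reward is random here purely for illustration.
        return [Experience(p, f"answer to {p}", self._rng.random()) for p in prompts]


class ToyTrainer:
    """Consumes batches; a real trainer would run a gradient update."""

    def __init__(self) -> None:
        self.steps = 0
        self.mean_rewards: list = []

    def train_step(self, batch: list) -> None:
        self.steps += 1
        self.mean_rewards.append(sum(e.reward for e in batch) / len(batch))


def run_toy_loop(num_rounds: int = 3, batch_size: int = 2):
    explorer, buffer, trainer = ToyExplorer(), ToyBuffer(), ToyTrainer()
    for round_idx in range(num_rounds):
        prompts = [f"task-{round_idx}-{i}" for i in range(batch_size)]
        buffer.write(explorer.explore(prompts))  # Explorer -> Buffer
        batch = buffer.read(batch_size)          # Buffer -> Trainer
        if batch:
            trainer.train_step(batch)
    return trainer, buffer
```

Because the explorer and trainer only talk to the buffer, either side could in principle run on its own schedule or hardware, which is the property the three-component design aims for.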

🚀 News

- [2026-02] [[Release Notes]](https://github.com/agentscope-ai/Trinity-RFT/releases/tag/v0.5.1) Trinity-RFT v0.5.1 released: Enhanced VLM support, logging improvements, bug fixes.

- [2026-02] [[Release Notes]](https://github.com/agentscope-ai/Trinity-RFT/releases/tag/v0.5.0) Trinity-RFT v0.5.0 released: colocate mode for single-GPU scenarios, trainer-driven weight synchronization, automatic suggestions for parallelism settings, and more.

- [2026-01] 🎉 Three papers accepted by ICLR 2026: CHORD, BOTS, and Group-relative REINFORCE variants. Try out these new algorithms in Trinity-RFT!

- [2026-01] [[Release Notes]](https://github.com/agentscope-ai/Trinity-RFT/releases/tag/v0.4.1) Trinity-RFT v0.4.1 released: upgraded verl to v0.7.0, Tinker backend supports OpenAI API, bug fixes.

- [2026-01] Introducing R3L: a systematic reflect-then-retry RL mechanism with efficient language-guided exploration and stable off-policy learning (paper).

- [2025-12] [[Release Notes]](https://github.com/agentscope-ai/Trinity-RFT/releases/tag/v0.4.0) Trinity-RFT v0.4.0 released: added a Tinker backend for users without GPUs, added more benchmarks, enhanced online RL, and more.

- [2025-12] Trinity-RFT powers the medical and health business of "Taobao Shangou", enabling the AI agent to understand vague symptoms, proactively ask follow-up questions, and provide precise recommendations (News).

- [2025-11] Introducing Learn-to-Ask: a framework for training proactive dialogue agents from offline expert data (paper).

- [2025-11] Introducing BOTS: online RL task selection for efficient LLM fine-tuning (paper).

- [2025-09] Our paper reveals a novel off-policy interpretation for group-relative REINFORCE and its variants like GRPO and AsymRE (implementation).

- [2025-08] Introducing CHORD: dynamic SFT + RL integration for advanced LLM fine-tuning (paper).

More...

- [2025-11] Trinity-RFT v0.3.3 released: bug fixes.

- [2025-11] Trinity-RFT v0.3.2 released: bug fixes and advanced task selection & scheduling.

- [2025-10] Trinity-RFT v0.3.1 released: multi-stage training support, improved agentic RL examples, LoRA support, debug mode and new RL algorithms.

- [2025-09] Trinity-RFT v0.3.0 released: enhanced Buffer, FSDP2 & Megatron support, multi-modal models, and new RL algorithms/examples.

- [2025-08] Trinity-RFT v0.2.1 released.

- [2025-07] Trinity-RFT v0.2.0 released.

- [2025-07] Technical report (arXiv v2) updated with new features, examples, and experiments: link.

- [2025-06] Trinity-RFT v0.1.1 released.

- [2025-05] Trinity-RFT v0.1.0 released, plus technical report.

- [2025-04] Trinity-RFT open sourced.

🔨 Tutorials and Guidelines

> [!TIP]
> Recommended Learning Paths
>
> 🆕 New users: Installation → Quick Start (GSM8K) → Configuration Guide → GPU Resource Guide
>
> 🔬 Algorithm researchers: Developer Guide → Algorithm Development Guide → CHORD Algorithm Example
>
> 🤖 Agent developers: Developer Guide → Workflow Development → General Multi-step Workflow Example

> [!NOTE]
> For more tutorials, please refer to the Trinity-RFT documentation.

🌟 Key Features

- Flexible RFT Modes:
  - Supports synchronous/asynchronous, on-policy/off-policy, and online/offline RL.
  - Rollout and training can run separately and scale independently across devices.
  - Boosts sample and time efficiency through experience replay.

- Agentic RL Support:
  - Supports both concatenated and general multi-step agentic workflows.
  - Can directly train agent applications developed with agent frameworks such as AgentScope.

- Full-Lifecycle Data Pipelines:
  - Enables pipeline processing of rollout tasks and experience samples.
  - Provides active data management (prioritization, cleaning, augmentation, etc.) throughout the RFT lifecycle.
  - Offers native support for multi-task joint learning and online task curriculum construction.

- User-Friendly Design:
  - Plug-and-play modules and a decoupled architecture make adoption and development easy.
  - Rich graphical user interfaces enable low-code usage.

🔧 Supported Algorithms

| Algorithm | Doc / Example | Source Code | Key Configurations |
|---|---|---|---|
| PPO [Paper] | [Doc] [Countdown Example] | [Code] | `algorithm_type: ppo` |
| GRPO [Paper] | [Doc] [GSM8K Example] | [Code] | `algorithm_type: grpo` |
| SFT | [Mixture-of-Thoughts Example] | [Code] | `algorithm_type: sft` |
| DPO [Paper] | [HumanLike Example] | [Code] | `algorithm_type: dpo` |
| CHORD 💡 [Paper] | [Doc] [ToolACE Example] | [Code] | `algorithm_type: mix_chord` |
| REC Series 💡 [Paper] | [GSM8K Example] | [Code] | `algorithm_type: rec` |
| RLOO [Paper] | - | [Code] | `algorithm_type: rloo` |
| REINFORCE++ [Paper] | - | [Code] | `algorithm_type: reinforceplusplus` |
| GSPO [Paper] | - | [Code] | `algorithm_type: gspo` |
| TOPR [Paper] | [GSM8K Example] | [Code] | `algorithm_type: topr` |
| sPPO [Paper] | [GSM8K Example] | [Code] | `algorithm_type: sppo` |
| AsymRE [Paper] | [GSM8K Example] | [Code] | `algorithm_type: asymre` |
| CISPO [Paper] | - | [Code] | `algorithm_type: cispo` |
| SAPO [Paper] | - | [Code] | `algorithm_type: sapo` |
| On-Policy Distillation [Blog] [Paper] | [GSM8K Example] | [Code] | `algorithm_type: on_policy_distill` |
| JSD (Jensen-Shannon Divergence) | [GSM8K Example] | [Code] | `algorithm_type: jsd` |
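To make the "group-relative" idea behind GRPO and its relatives in the table more concrete, here is a minimal sketch of the commonly described advantage computation: each response's reward is normalized against the other responses sampled for the same prompt. The function name, the use of population standard deviation, and the `eps` value are illustrative assumptions; this is not the code linked in the table.

```python
from statistics import mean, pstdev


def group_relative_advantages(rewards: list, eps: float = 1e-6) -> list:
    """Normalize rewards within one group of responses to the same prompt.

    advantage_i = (r_i - mean(r)) / (std(r) + eps)

    Assumption: population standard deviation is used; implementations differ
    on this detail and on the value of eps.
    """
    if not rewards:
        raise ValueError("rewards must contain at least one value")
    mu = mean(rewards)
    sigma = pstdev(rewards)
    return [(r - mu) / (sigma + eps) for r in rewards]


# Four responses to one prompt, two judged correct (reward 1) and two incorrect (reward 0).
advantages = group_relative_advantages([1.0, 0.0, 0.0, 1.0])
```

Responses that beat the group average receive a positive advantage and the rest a negative one, so no separate value model is needed to form a baseline. When all rewards in a group are equal, every advantage is zero and the group contributes no learning signal.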

Quick Start

> [!NOTE]
> This project is currently under active development. Comments and suggestions are welcome!

Minimal CPU-Only Quick Start

If you do not have access to a GPU, you can still try Trinity-RFT using the Tinker backend.

# Create and activate environment

python3.10 -m venv .venv

source .venv/bin/activate

# Install Trinity-RFT with CPU-only backend

pip install -e ".[tinker]"

Run a simple example:

trinity run --config examples/tinker/tinker.yaml

This example is designed to run on CPU-only machines. See the complete Tinker training example for more details.

To run Trinity-RFT on GPU machines instead, please follow the steps below.

Step 1: Installation

Before installing, make sure your system meets the following requirements:

GPU Requirements

- Python: version 3.10 to 3.12 (inclusive)

- CUDA: version >= 12.8

- GPUs: At least one NVIDIA GPU with compute capability 8.0 or higher (e.g., RTX 30 series, A100, H100)

Choose an installation method based on your scenario:

- If you have no GPU → Use Tinker backend

- If you want simple setup → Use Docker

- If you want development & contribution → Use Conda / venv
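The Python and GPU requirements listed above can be checked before installing. The sketch below is a hypothetical helper, not part of Trinity-RFT: the function names are made up for illustration, the GPU check assumes PyTorch is already installed (it returns an empty list otherwise), and the CUDA toolkit version is not checked here.

```python
import sys

MIN_PYTHON = (3, 10)             # lower bound from the requirements list
MAX_PYTHON = (3, 12)             # upper bound (inclusive)
MIN_COMPUTE_CAPABILITY = (8, 0)  # e.g. A100 is 8.0, RTX 30 series is 8.6


def python_version_ok(version=None) -> bool:
    """Return True if the (major, minor) version is within 3.10 to 3.12."""
    major_minor = tuple(version[:2]) if version is not None else sys.version_info[:2]
    return MIN_PYTHON <= major_minor <= MAX_PYTHON


def compute_capability_ok(capability) -> bool:
    """Return True if a (major, minor) compute capability is at least 8.0."""
    return tuple(capability) >= MIN_COMPUTE_CAPABILITY


def detect_gpu_capabilities() -> list:
    """List compute capabilities of visible NVIDIA GPUs, or [] if none are found.

    Assumes PyTorch is installed; without it, no GPUs are reported.
    """
    try:
        import torch
    except ImportError:
        return []
    if not torch.cuda.is_available():
        return []
    return [torch.cuda.get_device_capability(i) for i in range(torch.cuda.device_count())]


if __name__ == "__main__":
    print("Python version OK:", python_version_ok())
    gpus = detect_gpu_capabilities()
    if not gpus:
        print("No usable GPU detected; consider the Tinker backend.")
    for index, capability in enumerate(gpus):
        print(f"GPU {index}: capability {capability}, OK: {compute_capability_ok(capability)}")
```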

From Source (Recommended)

If you plan to customize or contribute to Trinity-RFT, this is the best option.

First, clone the repository:

git clone https://github.com/agentscope-ai/Trinity-RFT

cd Trinity-RFT

Then, set up the environment via one of the following options:

Using Pre-built Docker Image (Recommended for Beginners)

docker pull ghcr.io/agentscope-ai/trinity-rft:latest

# Run the container, replacing <path_to_your_data_and_checkpoints> with your actual path

docker run -it \

--gpus all \

--shm-size="64g" \

--rm \

-v $PWD:/workspace \

-v <path_to_your_data_and_checkpoints>:/data \

ghcr.io/agentscope-ai/trinity-rft:latest

This image uses `uv` to install all GPU-related dependencies of Trinity-RFT. The virtual environment is activated automatically when you enter the container (you can also activate it manually via `source /opt/venv/bin/activate` if needed). You can use `uv pip install` to add extra packages as necessary.

Using Conda

conda create -n trinity python=3.12

conda activate trinity

pip install -e ".[vllm,flash_attn]"

# If you have no GPU, comment out the line above and uncomment this instead:

# pip install -e ".[tinker]"

# If you encounter issues when installing flash-attn, try:

# pip install flash-attn==2.8.1 --no-build-isolation

pip install -e ".[dev]" # for development like linting and debugging

Using venv

python3.10 -m venv .venv

source .venv/bin/activate

pip install -e ".[vllm,flash_attn]"

# If you have no GPU, comment out the line above and uncomment this instead:

# pip install -e ".[tinker]"

# If you encounter issues when installing flash-attn, try:

# pip install flash-attn==2.8.1 --no-build-isolation

pip install -e ".[dev]" # for development like linting and debugging

Using uv

uv sync --extra vllm --extra dev --extra flash_attn

# If you have no GPU, try to use Tinker instead:

# uv sync --extra tinker --extra dev

Via PyPI

If you just want to use the package without modifying the code:

pip install trinity-rft

pip install flash-attn==2.8.1

Or with uv:

uv pip install trinity-rft

uv pip install flash-attn==2.8.1

For training with Megatron-LM, please refer to Megatron-LM Backend.

Step 2: Prepare Dataset and Model

Trinity-RFT supports most datasets and models from Huggingface and ModelScope.

Prepare the model in the local directory $MODEL_PATH/{model_name}:

# Using Huggingface

huggingface-cli download {model_name} --local-dir $MODEL_PATH/{model_name}

# Using Modelscope

modelscope download {model_name} --local_dir $MODEL_PATH/{model_name}

For more details about model downloading, see Huggingface or ModelScope.
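If you script the model download, the commands above can be assembled programmatically so that the `$MODEL_PATH/{model_name}` layout stays consistent. The helper below is a hypothetical sketch: `model_download_command` is not a Trinity-RFT function, it only builds the argument list and runs nothing, and it mirrors the flags exactly as written in the commands above.

```python
import os
import shlex


def model_download_command(model_name: str, hub: str = "huggingface", model_root=None) -> list:
    """Build the download command for a model without executing it.

    model_root defaults to the MODEL_PATH environment variable, falling back
    to the current directory (the fallback is an assumption of this sketch).
    """
    root = model_root if model_root is not None else os.environ.get("MODEL_PATH", ".")
    local_dir = os.path.join(root, model_name)
    if hub == "huggingface":
        return ["huggingface-cli", "download", model_name, "--local-dir", local_dir]
    if hub == "modelscope":
        return ["modelscope", "download", model_name, "--local_dir", local_dir]
    raise ValueError(f"unknown hub: {hub!r}")


if __name__ == "__main__":
    cmd = model_download_command("Qwen/Qwen2.5-1.5B-Instruct", model_root="/models")
    # Print a shell-safe version for inspection; pass `cmd` to subprocess.run to execute.
    print(shlex.join(cmd))
```

Returning a list rather than a single string avoids shell-quoting problems if the path contains spaces.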

Prepare the dataset in the local directory $DATASET_PATH/{dataset_name}:

# Using Huggingface

huggingface-cli download {dataset_name} --repo-type dataset --local-dir $DATASET_PATH/{dataset_name}

# Using Modelscope

modelscope download --dataset {dataset_name} --local_dir $DATASET_PATH/{dataset_name}

Step 3: Configurations

Trinity-RFT provides a web interface for configuring your RFT process.

> [!NOTE]
> This is an experimental feature, and we will continue to improve it.

To launch the web interface for minimal configurations, run:

trinity studio --port 8080

You can then configure your RFT process on the web page and generate a config file. You can save the config file for later use or run it directly as described in the following section.

Advanced users can also edit the config file directly.

We provide example config files in examples.

For complete GUI features, please refer to the monorepo for Trinity-Studio.
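When editing a config file by hand, a typo in `algorithm_type` is an easy mistake to make. The sketch below checks a value against the identifiers listed in the Supported Algorithms table. It is a hypothetical helper, not part of Trinity-RFT: the nested layout (`algorithm` → `algorithm_type`) of the already-loaded config dictionary is an assumption, and the framework may accept values beyond those in the table.

```python
# Identifiers copied from the "Key Configurations" column of the algorithm table.
TABLE_ALGORITHM_TYPES = frozenset({
    "ppo", "grpo", "sft", "dpo", "mix_chord", "rec", "rloo",
    "reinforceplusplus", "gspo", "topr", "sppo", "asymre",
    "cispo", "sapo", "on_policy_distill", "jsd",
})


def check_algorithm_type(config: dict) -> str:
    """Return the configured algorithm type, or raise if it is missing or unlisted.

    Assumes the config was already parsed into a dict with the (hypothetical)
    shape {"algorithm": {"algorithm_type": "<name>"}}.
    """
    algorithm = config.get("algorithm") or {}
    algo_type = algorithm.get("algorithm_type")
    if algo_type is None:
        raise KeyError("config has no algorithm.algorithm_type entry")
    if algo_type not in TABLE_ALGORITHM_TYPES:
        known = ", ".join(sorted(TABLE_ALGORITHM_TYPES))
        raise ValueError(f"unlisted algorithm_type {algo_type!r}; listed values: {known}")
    return algo_type


if __name__ == "__main__":
    print(check_algorithm_type({"algorithm": {"algorithm_type": "grpo"}}))
```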

Step 4: Run the RFT Process

Start a ray cluster:

# On master node

ray start --head

# On worker nodes

ray start --address=<master_address>

(Optional) You may use Wandb / TensorBoard / MLflow for better monitoring. Please refer to this documentation for the corresponding configurations. For example, to log in to Wandb:

export WANDB_API_KEY=<your_api_key>

wandb login

For command-line users, run the RFT process:

trinity run --config <config_path>

For example, to fine-tune Qwen2.5-1.5B-Instruct on GSM8K with GRPO:

trinity run --config examples/grpo_gsm8k/gsm8k.yaml

For studio users, click "Run" in the web interface.

Contribution Guide

This project is currently under active development. Star the repo and watch its releases for the latest updates!

We welcome all kinds of contributions from the community, including:

- Documentation improvements

- Example workflows, algorithms, and data pipelines

- Bug fixes and performance optimizations

See CONTRIBUTING.md for detailed contribution guidelines, as well as our good-first-issue list.

Acknowledgements

This project is built upon many excellent open-source projects, including:

- verl, FSDP and Megatron-LM for LLM training;

- vLLM for LLM inference;

- Data-Juicer for data processing pipelines;

- AgentScope for agentic workflow;

- Ray for distributed systems;

We have also drawn inspiration from RL frameworks such as OpenRLHF, TRL, ChatLearn and rLLM, among others.

Citation

@misc{trinity-rft,
      title={Trinity-RFT: A General-Purpose and Unified Framework for Reinforcement Fine-Tuning of Large Language Models},
      author={Xuchen Pan and Yanxi Chen and Yushuo Chen and Yuchang Sun and Daoyuan Chen and Wenhao Zhang and Yuexiang Xie and Yilun Huang and Yilei Zhang and Dawei Gao and Yaliang Li and Bolin Ding and Jingren Zhou},
      year={2025},
      eprint={2505.17826},
      archivePrefix={arXiv},
      primaryClass={cs.LG},
      url={https://arxiv.org/abs/2505.17826},
}